To track an object we need a video and the object's position in the first frame.

To run the code, specify the input (a camera id or a video file). Then select a bounding box with the mouse and press any key to start tracking.
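The input rule can be sketched in isolation. The following is a minimal illustration, assuming only what the sample's `main` shows: an argument that is exactly one digit is treated as a camera id, and anything else as a video file name. `InputSource` and `parseInput` are hypothetical names introduced here; the sample performs this check inline.

```cpp
#include <cctype>
#include <string>

// Hypothetical type (not part of the sample): how an input argument is interpreted.
struct InputSource {
    bool is_camera;    // true when the argument is a single-digit camera id
    int camera_id;     // meaningful only when is_camera is true
    std::string path;  // meaningful only when is_camera is false
};

// Hypothetical helper mirroring the single-digit check used in the sample's main().
InputSource parseInput(const std::string& arg) {
    InputSource src{false, -1, ""};
    if (arg.size() == 1 && std::isdigit(static_cast<unsigned char>(arg[0]))) {
        src.is_camera = true;
        src.camera_id = arg[0] - '0';
    } else {
        src.path = arg;  // e.g. "video.mp4"; also any multi-digit string such as "12"
    }
    return src;
}
```

Under this rule `parseInput("0")` selects camera 0, while `parseInput("12")` is treated as a file name, so only camera ids 0 to 9 can be selected this way.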

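#include <opencv2/features2d.hpp>
#include <opencv2/videoio.hpp>
#include <opencv2/imgproc.hpp>
#include <opencv2/calib3d.hpp>
#include <opencv2/highgui.hpp>
#include <cctype>
#include <vector>
#include <iostream>
#include <iomanip>

#include "stats.h" // Stats structure
#include "utils.h" // drawing and printing helpers

using namespace std;
using namespace cv;

const double akaze_thresh = 3e-4;    // AKAZE detection threshold
const double ransac_thresh = 2.5f;   // RANSAC reprojection threshold for inliers
const double nn_match_ratio = 0.8f;  // nearest-neighbour matching ratio
const int bb_min_inliers = 100;      // minimum number of inliers to draw the bounding box
const int stats_update_period = 10;  // on-screen statistics are refreshed every 10 frames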

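namespace example {

class Tracker
{
public:
    Tracker(Ptr<Feature2D> _detector, Ptr<DescriptorMatcher> _matcher) :
        detector(_detector),
        matcher(_matcher)
    {}

    // Stores the first frame, its keypoints and descriptors, and the object bounding box.
    void setFirstFrame(const Mat frame, vector<Point2f> bb, string title, Stats& stats);

    // Matches a new frame against the first frame and returns the visualization.
    Mat process(const Mat frame, Stats& stats);

    Ptr<Feature2D> getDetector() {
        return detector;
    }

protected:
    Ptr<Feature2D> detector;
    Ptr<DescriptorMatcher> matcher;
    Mat first_frame, first_desc;
    vector<KeyPoint> first_kp;
    vector<Point2f> object_bb;
};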

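void Tracker::setFirstFrame(const Mat frame, vector<Point2f> bb, string title, Stats& stats)
{
    // Convert the bounding-box corners to integer points for fillPoly.
    cv::Point* ptMask = new cv::Point[bb.size()];
    const Point* ptContain = { &ptMask[0] };
    int iSize = static_cast<int>(bb.size());
    for (size_t i = 0; i < bb.size(); i++) {
        ptMask[i].x = static_cast<int>(bb[i].x);
        ptMask[i].y = static_cast<int>(bb[i].y);
    }
    first_frame = frame.clone();

    // Detect keypoints only inside the selected bounding box.
    cv::Mat matMask = cv::Mat::zeros(frame.size(), CV_8UC1);
    cv::fillPoly(matMask, &ptContain, &iSize, 1, cv::Scalar::all(255));
    detector->detectAndCompute(first_frame, matMask, first_kp, first_desc);
    stats.keypoints = (int)first_kp.size();

    drawBoundingBox(first_frame, bb);
    putText(first_frame, title, Point(0, 60), FONT_HERSHEY_PLAIN, 5, Scalar::all(0), 4);
    object_bb = bb;
    delete[] ptMask;
}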

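Mat Tracker::process(const Mat frame, Stats& stats)
{
    TickMeter tm;
    vector<KeyPoint> kp;
    Mat desc;

    tm.start();
    detector->detectAndCompute(frame, noArray(), kp, desc);
    stats.keypoints = (int)kp.size();

    // Find the two nearest neighbours of each first-frame descriptor and apply the ratio test.
    vector< vector<DMatch> > matches;
    vector<KeyPoint> matched1, matched2;
    matcher->knnMatch(first_desc, desc, matches, 2);
    for(unsigned i = 0; i < matches.size(); i++) {
        if(matches[i][0].distance < nn_match_ratio * matches[i][1].distance) {
            matched1.push_back(first_kp[matches[i][0].queryIdx]);
            matched2.push_back(      kp[matches[i][0].trainIdx]);
        }
    }
    stats.matches = (int)matched1.size();

    // Estimate the homography with RANSAC; at least 4 point pairs are required.
    Mat inlier_mask, homography;
    vector<KeyPoint> inliers1, inliers2;
    vector<DMatch> inlier_matches;
    if(matched1.size() >= 4) {
        // Points() is the KeyPoint-to-Point2f conversion helper from utils.h.
        homography = findHomography(Points(matched1), Points(matched2),
                                    RANSAC, ransac_thresh, inlier_mask);
    }
    tm.stop();
    stats.fps = 1. / tm.getTimeSec();

    if(matched1.size() < 4 || homography.empty()) {
        Mat res;
        hconcat(first_frame, frame, res);
        stats.inliers = 0;
        stats.ratio = 0;
        return res;
    }

    // Keep only the matches that RANSAC marked as inliers.
    for(unsigned i = 0; i < matched1.size(); i++) {
        if(inlier_mask.at<uchar>(i)) {
            int new_i = static_cast<int>(inliers1.size());
            inliers1.push_back(matched1[i]);
            inliers2.push_back(matched2[i]);
            inlier_matches.push_back(DMatch(new_i, new_i, 0));
        }
    }
    stats.inliers = (int)inliers1.size();
    stats.ratio = stats.inliers * 1.0 / stats.matches;

    // Project the original bounding box into the current frame.
    vector<Point2f> new_bb;
    perspectiveTransform(object_bb, new_bb, homography);
    Mat frame_with_bb = frame.clone();
    if(stats.inliers >= bb_min_inliers) {
        drawBoundingBox(frame_with_bb, new_bb);
    }
    Mat res;
    drawMatches(first_frame, inliers1, frame_with_bb, inliers2,
                inlier_matches, res,
                Scalar(255, 0, 0), Scalar(255, 0, 0));
    return res;
}

} // namespace example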

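int main(int argc, char **argv)
{
    CommandLineParser parser(argc, argv,
        "{@input_path |0|input path can be a camera id, like 0,1,2 or a video filename}");
    parser.printMessage();
    string input_path = parser.get<string>(0);
    string video_name = input_path;

    VideoCapture video_in;

    // A single digit is treated as a camera id; anything else as a video file name.
    if (input_path.size() == 1 && isdigit(static_cast<unsigned char>(input_path[0])))
    {
        int camera_no = input_path[0] - '0';
        video_in.open(camera_no);
    }
    else {
        video_in.open(video_name);
    }

    if(!video_in.isOpened()) {
        cerr << "Couldn't open " << video_name << endl;
        return 1;
    }

    Stats stats, akaze_stats, orb_stats;
    Ptr<AKAZE> akaze = AKAZE::create();
    akaze->setThreshold(akaze_thresh);
    Ptr<ORB> orb = ORB::create();
    Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");
    example::Tracker akaze_tracker(akaze, matcher);
    example::Tracker orb_tracker(orb, matcher);

    Mat frame;
    namedWindow(video_name, WINDOW_NORMAL);
    cout << "\nPress any key to stop the video and select a bounding box" << endl;

    // Play the video until a key is pressed; the last frame shown becomes the first frame.
    while (waitKey(1) < 1)
    {
        video_in >> frame;
        cv::resizeWindow(video_name, frame.cols, frame.rows);
        imshow(video_name, frame);
    }

    // Turn the selected rectangle into its four corners, clockwise from the top-left.
    vector<Point2f> bb;
    cv::Rect uBox = cv::selectROI(video_name, frame);
    bb.push_back(cv::Point2f(static_cast<float>(uBox.x), static_cast<float>(uBox.y)));
    bb.push_back(cv::Point2f(static_cast<float>(uBox.x+uBox.width), static_cast<float>(uBox.y)));
    bb.push_back(cv::Point2f(static_cast<float>(uBox.x+uBox.width), static_cast<float>(uBox.y+uBox.height)));
    bb.push_back(cv::Point2f(static_cast<float>(uBox.x), static_cast<float>(uBox.y+uBox.height)));

    akaze_tracker.setFirstFrame(frame, bb, "AKAZE", stats);
    orb_tracker.setFirstFrame(frame, bb, "ORB", stats);

    Stats akaze_draw_stats, orb_draw_stats;
    Mat akaze_res, orb_res, res_frame;
    int i = 0;
    for(;;) {
        i++;
        bool update_stats = (i % stats_update_period == 0);
        video_in >> frame;
        // Stop when there are no more frames.
        if(frame.empty()) break;

        akaze_res = akaze_tracker.process(frame, stats);
        akaze_stats += stats;
        if(update_stats) {
            akaze_draw_stats = stats;
        }

        // Give ORB the same keypoint budget that AKAZE found in this frame.
        orb->setMaxFeatures(stats.keypoints);
        orb_res = orb_tracker.process(frame, stats);
        orb_stats += stats;
        if(update_stats) {
            orb_draw_stats = stats;
        }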

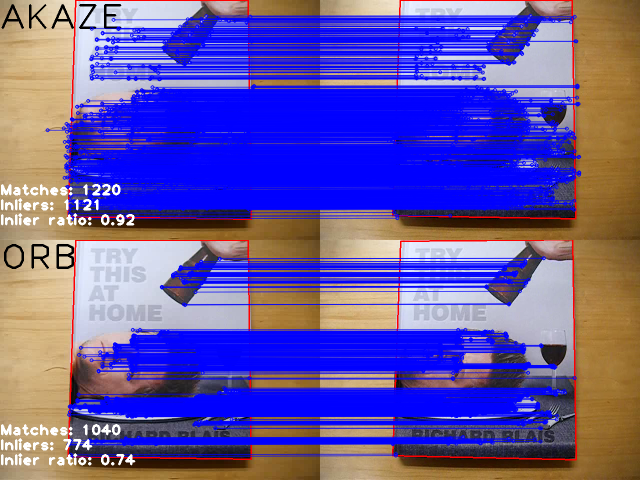

drawStatistics(akaze_res, akaze_draw_stats);

drawStatistics(orb_res, orb_draw_stats);

vconcat(akaze_res, orb_res, res_frame);

}

akaze_stats /= i - 1;

orb_stats /= i - 1;

printStatistics("AKAZE", akaze_stats);

printStatistics("ORB", orb_stats);

return 0;

}

This class implements the algorithm described above using the given feature detector and descriptor matcher.
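Two steps of that algorithm, the nearest-neighbour ratio test and the inlier ratio, can be illustrated without OpenCV. The following is a minimal sketch, assuming a simplified `Match` struct that stands in for `cv::DMatch`; `ratioTest` and `inlierRatio` are hypothetical names, and the guards for candidate lists shorter than two and for zero matches are additions that the sample does not have.

```cpp
#include <vector>

// Simplified stand-in for cv::DMatch (assumption: only the fields used here).
struct Match {
    int queryIdx;    // index of the keypoint in the first frame
    int trainIdx;    // index of the keypoint in the current frame
    float distance;  // descriptor distance, smaller is better
};

// Keep a query's best match only when it is clearly better than the runner-up.
// `knn` holds, for each query descriptor, its candidates sorted by distance.
std::vector<Match> ratioTest(const std::vector<std::vector<Match>>& knn, double ratio) {
    std::vector<Match> good;
    for (const auto& candidates : knn) {
        if (candidates.size() < 2) continue;  // no runner-up to compare against
        if (candidates[0].distance < ratio * candidates[1].distance) {
            good.push_back(candidates[0]);
        }
    }
    return good;
}

// Fraction of ratio-test matches that survive as homography inliers.
double inlierRatio(int inliers, int matches) {
    return matches > 0 ? static_cast<double>(inliers) / matches : 0.0;
}
```

With `ratio` set to 0.8, as `nn_match_ratio` is in the sample, a best match at distance 10 with a runner-up at distance 20 is kept, while a best match at distance 18 with the same runner-up is rejected as ambiguous.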