OpenCV 4.14.0-pre

Open Source Computer Vision

Prev Tutorial: Back Projection

Next Tutorial: Finding contours in your image

| | |
|---|---|
| Original author | Ana Huamán |
| Compatibility | OpenCV >= 3.0 |

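In this tutorial you will learn how to:

- Use the OpenCV function matchTemplate() to search for matches between an image patch and an input image.
- Use the OpenCV function minMaxLoc() to find the minimum and maximum values (and their positions) in a given array.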

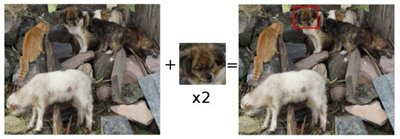

Template matching is a technique for finding areas of an image that match (are similar to) a template image (patch).

The patch must be a rectangle, but not all of the rectangle may be relevant. In that case, a mask can be used to isolate the portion of the patch that should be used to find the match.

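We need two primary components:

- Source image (I): the image in which we expect to find a match to the template image.
- Template image (T): the patch image that is compared against the source image.

Our goal is to detect the highest matching area: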

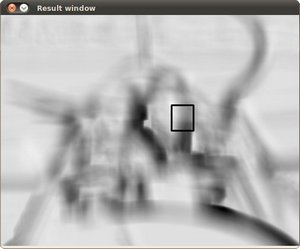

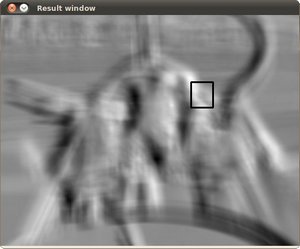

The image above is the result R of sliding the patch over the source image using the metric TM_CCORR_NORMED. Each location (x, y) in R stores the match score for the patch placed with its top-left corner at (x, y). The brightest locations indicate the highest matches. As you can see, the location marked by the red circle is probably the one with the highest value, so that location (the rectangle with that point as its top-left corner and with width and height equal to those of the patch image) is considered the match.
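The sliding process can be sketched in a few lines of pure Python. This is only an illustration of the TM_CCORR_NORMED formula on small lists of numbers, not OpenCV's implementation; the function name `match_ccorr_normed`, the sample data, and the choice to return 0 when the denominator is zero are assumptions made for this example.

```python
import math

def match_ccorr_normed(image, templ):
    """Slide templ over image and return the TM_CCORR_NORMED score map R.

    image and templ are lists of rows of grayscale values.
    R has (H - h + 1) rows and (W - w + 1) columns.
    """
    H, W = len(image), len(image[0])
    h, w = len(templ), len(templ[0])
    templ_energy = sum(t * t for row in templ for t in row)
    result = []
    for y in range(H - h + 1):
        out_row = []
        for x in range(W - w + 1):
            cross = 0.0
            window_energy = 0.0
            for ty in range(h):
                for tx in range(w):
                    i_val = image[y + ty][x + tx]
                    cross += templ[ty][tx] * i_val
                    window_energy += i_val * i_val
            denom = math.sqrt(templ_energy * window_energy)
            # Assumption for this sketch: an all-zero window scores 0.
            out_row.append(cross / denom if denom > 0 else 0.0)
        result.append(out_row)
    return result

image = [
    [1, 2, 1, 0],
    [0, 9, 8, 1],
    [1, 7, 9, 2],
    [0, 1, 2, 1],
]
templ = [
    [9, 8],
    [7, 9],
]

R = match_ccorr_normed(image, templ)
# Highest score and its (x, y) location, i.e. the top-left corner of the match.
best = max((value, (x, y)) for y, row in enumerate(R) for x, value in enumerate(row))
print(best)
```

The patch appears verbatim at column 1, row 1 of the sample image, so that location receives the highest score in R.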

OpenCV implements template matching in the function matchTemplate(). Six methods are available:

method=TM_SQDIFF

\[R(x,y)= \sum _{x',y'} (T(x',y')-I(x+x',y+y'))^2\]

method=TM_SQDIFF_NORMED

\[R(x,y)= \frac{\sum_{x',y'} (T(x',y')-I(x+x',y+y'))^2}{\sqrt{\sum_{x',y'}T(x',y')^2 \cdot \sum_{x',y'} I(x+x',y+y')^2}}\]

method=TM_CCORR

\[R(x,y)= \sum _{x',y'} (T(x',y') \cdot I(x+x',y+y'))\]

method=TM_CCORR_NORMED

\[R(x,y)= \frac{\sum_{x',y'} (T(x',y') \cdot I(x+x',y+y'))}{\sqrt{\sum_{x',y'}T(x',y')^2 \cdot \sum_{x',y'} I(x+x',y+y')^2}}\]

method=TM_CCOEFF

\[R(x,y)= \sum _{x',y'} (T'(x',y') \cdot I'(x+x',y+y'))\]

where

\[\begin{array}{l} T'(x',y')=T(x',y') - 1/(w \cdot h) \cdot \sum _{x'',y''} T(x'',y'') \\ I'(x+x',y+y')=I(x+x',y+y') - 1/(w \cdot h) \cdot \sum _{x'',y''} I(x+x'',y+y'') \end{array}\]

method=TM_CCOEFF_NORMED

\[R(x,y)= \frac{ \sum_{x',y'} (T'(x',y') \cdot I'(x+x',y+y')) }{ \sqrt{\sum_{x',y'}T'(x',y')^2 \cdot \sum_{x',y'} I'(x+x',y+y')^2} }\]
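To make the role of the mean-subtracted terms T' and I' concrete, here is a minimal sketch that evaluates the TM_CCOEFF_NORMED formula for a single window. The helper name `ccoeff_normed` and the sample values are hypothetical, and returning 0 for a zero denominator is an assumption of this sketch rather than documented OpenCV behaviour.

```python
import math

def ccoeff_normed(templ, window):
    """TM_CCOEFF_NORMED score for one window the same size as templ."""
    t = [v for row in templ for v in row]
    i = [v for row in window for v in row]
    t_mean = sum(t) / len(t)
    i_mean = sum(i) / len(i)
    t_c = [v - t_mean for v in t]  # T' in the formula
    i_c = [v - i_mean for v in i]  # I' in the formula
    num = sum(a * b for a, b in zip(t_c, i_c))
    den = math.sqrt(sum(a * a for a in t_c) * sum(b * b for b in i_c))
    # Assumption for this sketch: a flat template or window scores 0.
    return num / den if den > 0 else 0.0

templ = [[10, 20], [30, 40]]
brighter = [[60, 70], [80, 90]]  # same pattern, every pixel + 50
inverted = [[40, 30], [20, 10]]  # same pattern with reversed contrast

score_brighter = ccoeff_normed(templ, brighter)
score_inverted = ccoeff_normed(templ, inverted)
print(score_brighter, score_inverted)
```

Because both means are subtracted before correlating, a uniform brightness offset leaves the score unchanged, while a contrast-reversed window scores at the opposite end of the range.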

Declare some global variables, such as the image, template and result matrices, as well as the match method and the window names:

Load the source image, template, and optionally, if supported for the matching method, a mask:

Create the Trackbar to enter the kind of matching method to be used. When a change is detected the callback function is called.
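The trackbar position is simply an integer from 0 to 5 that selects one of the six methods. The following sketch simulates that wiring without a GUI. The `state` dictionary and the loop that "moves" the slider are hypothetical stand-ins; in a real program the callback would be registered with `cv2.createTrackbar(trackbarName, windowName, value, count, onChange)`.

```python
# Method names in the order of OpenCV's template-matching mode values 0..5.
METHODS = [
    "TM_SQDIFF",         # 0
    "TM_SQDIFF_NORMED",  # 1
    "TM_CCORR",          # 2
    "TM_CCORR_NORMED",   # 3
    "TM_CCOEFF",         # 4
    "TM_CCOEFF_NORMED",  # 5
]
MAX_TRACKBAR = len(METHODS) - 1

# Hypothetical shared state, standing in for the tutorial's global variables.
state = {"match_method": 0, "calls": 0}

def matching_method(position):
    """Callback invoked with the new trackbar position whenever it changes."""
    state["match_method"] = position
    state["calls"] += 1
    return METHODS[position]

# Simulate the user dragging the slider to positions 3 and then 5.
for pos in (3, MAX_TRACKBAR):
    name = matching_method(pos)
    print(pos, name)
```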

Let's check out the callback function. First, it makes a copy of the source image:

Perform the template matching operation. The arguments are naturally the input image I, the template T, the result R and the match_method (given by the Trackbar), and optionally the mask image M.
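Two details of this step are easy to miss: the result R is smaller than the input, with (W - w + 1) columns and (H - h + 1) rows, and a mask changes which template pixels contribute. The sketch below illustrates both for TM_SQDIFF in pure Python. It assumes the mask multiplies each difference before squaring; `match_sqdiff` and the sample data are hypothetical and are not the OpenCV implementation.

```python
def match_sqdiff(image, templ, mask=None):
    """TM_SQDIFF score map; mask entries of 0 exclude template pixels."""
    H, W = len(image), len(image[0])
    h, w = len(templ), len(templ[0])
    if mask is None:
        mask = [[1] * w for _ in range(h)]  # no mask: every pixel counts
    result = []
    for y in range(H - h + 1):
        row = []
        for x in range(W - w + 1):
            total = 0
            for ty in range(h):
                for tx in range(w):
                    diff = templ[ty][tx] - image[y + ty][x + tx]
                    total += (diff * mask[ty][tx]) ** 2
            row.append(total)
        result.append(row)
    return result

image = [
    [5, 5, 5, 5, 5],
    [5, 9, 0, 5, 5],
    [5, 9, 9, 5, 5],
]
templ = [
    [9, 7],
    [9, 9],
]
mask = [
    [1, 0],  # ignore the top-right pixel of the patch
    [1, 1],
]

plain = match_sqdiff(image, templ)
masked = match_sqdiff(image, templ, mask)
print(len(plain), len(plain[0]))   # rows and columns of R
print(plain[1][1], masked[1][1])   # score at x=1, y=1 without and with the mask
```

At x=1, y=1 the only mismatching pixel is the one the mask excludes, so the masked score drops to zero there while the unmasked score does not.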

We normalize the results:
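Normalizing rescales the scores to a fixed range, which makes R easy to display, without changing where the minimum and maximum lie. A minimal min-max sketch, assuming a target range of [0, 1]; `normalize_minmax` is a hypothetical helper, and mapping a constant input to the lower bound is a choice made for this example.

```python
def normalize_minmax(result, alpha=0.0, beta=1.0):
    """Linearly rescale all values so the minimum maps to alpha and the maximum to beta."""
    flat = [v for row in result for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # Assumption for this sketch: a constant map becomes all alpha.
        return [[alpha for _ in row] for row in result]
    return [[alpha + (v - lo) / (hi - lo) * (beta - alpha) for v in row]
            for row in result]

raw = [[32, 90, 14], [49, 0, 200]]
norm = normalize_minmax(raw)
print(norm)
```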

We locate the minimum and maximum values in the result matrix R, together with their positions, by using minMaxLoc().
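Conceptually, this search is a single pass over R that tracks the smallest and largest values and where they occur. The sketch below mirrors the shape of what `cv2.minMaxLoc` returns, namely `(minVal, maxVal, minLoc, maxLoc)` with locations given as (x, y); the function `min_max_loc` itself is a hypothetical pure-Python stand-in.

```python
def min_max_loc(result):
    """Return (min_val, max_val, min_loc, max_loc); locations are (x, y)."""
    min_val = max_val = result[0][0]
    min_loc = max_loc = (0, 0)
    for y, row in enumerate(result):
        for x, v in enumerate(row):
            if v < min_val:
                min_val, min_loc = v, (x, y)
            if v > max_val:
                max_val, max_loc = v, (x, y)
    return min_val, max_val, min_loc, max_loc

R = [[0.2, 0.9, 0.1], [0.4, 0.0, 1.0]]
min_val, max_val, min_loc, max_loc = min_max_loc(R)
print(min_val, max_val, min_loc, max_loc)
```

Note that a location is (column, row), so it indexes the list as `R[y][x]`.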

For the first two methods (TM_SQDIFF and TM_SQDIFF_NORMED) the best matches are the lowest values. For all the others, higher values represent better matches. So, we save the corresponding location in the matchLoc variable:
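The selection rule is small enough to state as code. In this sketch the methods are identified by name for readability; `pick_match_loc` and the sample locations are hypothetical.

```python
# Methods that measure a difference: smaller scores mean better matches.
LOWER_IS_BETTER = {"TM_SQDIFF", "TM_SQDIFF_NORMED"}

def pick_match_loc(method, min_loc, max_loc):
    """Choose the best-match location according to the matching method."""
    return min_loc if method in LOWER_IS_BETTER else max_loc

min_loc, max_loc = (12, 40), (87, 3)
for method in ("TM_SQDIFF", "TM_CCORR_NORMED"):
    print(method, pick_match_loc(method, min_loc, max_loc))
```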

Display the source image and the result matrix. Draw a rectangle around the highest possible matching area:
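The rectangle is fully determined by matchLoc and the template size: matchLoc is its top-left corner, and the opposite corner is offset by the template's width and height. A minimal sketch of that arithmetic, with the hypothetical helper `match_rectangle`; the two corners are what a drawing call such as `cv2.rectangle(img, pt1, pt2, color, thickness)` would take as `pt1` and `pt2`.

```python
def match_rectangle(match_loc, templ_width, templ_height):
    """Return the (top_left, bottom_right) corners of the matched area."""
    x, y = match_loc
    return (x, y), (x + templ_width, y + templ_height)

# Example sizes (assumed for illustration): 640x480 source, 100x50 template.
image_w, image_h = 640, 480
templ_w, templ_h = 100, 50

# The largest location R can contain is (W - w, H - h).
last_loc = (image_w - templ_w, image_h - templ_h)
top_left, bottom_right = match_rectangle(last_loc, templ_w, templ_h)
print(top_left, bottom_right)
```

Because R only has (W - w + 1) by (H - h + 1) entries, even the last possible location produces a rectangle that ends at the image border rather than beyond it.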

and a template image: