|

OpenCV

4.9.0-dev

Open Source Computer Vision

|


This tutorial is devoted to the Astra Series of Orbbec 3D cameras (https://orbbec3d.com/index/Product/info.html?cate=38&id=36). These cameras have a depth sensor in addition to a common color sensor. The depth sensor can be read using the open source OpenNI API with the cv::VideoCapture class. The color video stream is provided through the regular camera interface.

In order to use the Astra camera's depth sensor with OpenCV you should do the following steps:

For instance, if you use 64-bit GNU/Linux, run:

When you are done with the installation, make sure to replug your device for the udev rules to take effect. The camera should now work as a general camera device. Note that your current user should belong to the video group to have access to the camera. Also, make sure to source the OpenNIDevEnvironment file:

To verify that the source command works and that the OpenNI library and header files can be found, run the following command. You should see something similar in your terminal:

If the above two variables are empty, then you need to source OpenNIDevEnvironment again.
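The same check can be sketched from C++, for example at the start of a program that depends on OpenNI. The variable names OPENNI2_INCLUDE and OPENNI2_REDIST are an assumption here, since the text above does not name them, and both helper functions are hypothetical:

```cpp
#include <cassert>
#include <cstdlib>

// Hypothetical helper: true if the environment variable exists and is non-empty.
bool isEnvSet(const char* name)
{
    assert(name != nullptr);
    const char* value = std::getenv(name);
    return value != nullptr && value[0] != '\0';
}

// Assumed variable names; adjust them if your OpenNIDevEnvironment file
// exports different ones.
bool openniEnvironmentLooksSourced()
{
    return isEnvSet("OPENNI2_INCLUDE") && isEnvSet("OPENNI2_REDIST");
}
```

If openniEnvironmentLooksSourced() returns false, source OpenNIDevEnvironment in the shell that launches the program and try again.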

Note: newer versions of the Orbbec OpenNI SDK do not provide install.sh any more. You can use the following script to initialize environment:

Now you can configure OpenCV with OpenNI support enabled by setting the WITH_OPENNI2 flag in CMake. You may also like to enable the BUILD_EXAMPLES flag to get a code sample working with your Astra camera. Run the following commands in the directory containing OpenCV source code to enable OpenNI support:

The Astra Pro camera has two sensors: a depth sensor and a color sensor. The depth sensor can be read using the OpenNI interface with the cv::VideoCapture class. The color video stream is not available through the OpenNI API and is only provided via the regular camera interface. So, to get both depth and color frames, two cv::VideoCapture objects should be created:

The first object will use the OpenNI2 API to retrieve depth data. The second one uses the Video4Linux2 interface to access the color sensor. Note that the example above assumes that the Astra camera is the first camera in the system. If you have more than one camera connected, you may need to explicitly set the proper camera number.

Before using the created VideoCapture objects you may want to set up stream parameters by setting objects' properties. The most important parameters are frame width, frame height and fps. For this example, we’ll configure width and height of both streams to VGA resolution, which is the maximum resolution available for both sensors, and we’d like both stream parameters to be the same for easier color-to-depth data registration:

To set and retrieve properties of the sensor data generators, use the cv::VideoCapture::set and cv::VideoCapture::get methods respectively, e.g.:

The following properties of cameras available through the OpenNI interface are supported for the depth generator:

cv::CAP_PROP_OPENNI2_MIRROR – Flag to enable or disable mirroring for this stream. Set to 0 to disable mirroring.

The following properties are read-only:

After the VideoCapture objects have been set up, you can start reading frames from them.

As there are two video sources that should be read simultaneously, it’s necessary to create two threads to avoid blocking. An example implementation gets frames from each sensor in a new thread and stores them in a list along with their timestamps:
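The reading loop can be sketched independently of the camera API. In the sketch below, Frame, steadyNowNs and readFrames are hypothetical names, the image type is a template parameter (cv::Mat in a real program), and grab stands for any callable that fills an image and returns true on success. The cap of 64 stored frames is an arbitrary choice:

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <cstddef>
#include <cstdint>
#include <list>
#include <mutex>
#include <utility>

// One captured frame plus the time at which it was grabbed.
template <class Image>
struct Frame
{
    std::int64_t timestampNs = 0;
    Image image{};
};

// Current time of a monotonic clock, in nanoseconds.
inline std::int64_t steadyNowNs()
{
    using namespace std::chrono;
    return duration_cast<nanoseconds>(steady_clock::now().time_since_epoch()).count();
}

// Reader loop, meant to run in its own thread, one per sensor.
// Frames are appended at the back, so the front is always the earliest frame.
template <class Image, class Grab>
void readFrames(std::list<Frame<Image>>& frames,
                std::mutex& mtx,
                std::condition_variable& dataReady,
                const std::atomic<bool>& isFinish,
                Grab grab,
                std::size_t maxFrames = 64)
{
    assert(maxFrames > 0);
    while (!isFinish)
    {
        Frame<Image> f;
        if (!grab(f.image))
            continue;                      // a real grab call blocks until a frame arrives
        f.timestampNs = steadyNowNs();     // timestamp taken right after the grab
        {
            std::lock_guard<std::mutex> lock(mtx);
            if (frames.size() >= maxFrames)
                frames.pop_front();        // drop the oldest frame when the list is full
            frames.push_back(std::move(f));
        }
        dataReady.notify_one();            // wake up the main thread
    }
}
```

Each sensor would get its own std::thread running readFrames with its own list, while the mutex, the condition variable and the isFinish flag are shared with the main thread.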

VideoCapture can retrieve the following data:

When new data are available, each reading thread notifies the main thread using a condition variable. Frames are stored in an ordered list: the first frame in the list is the earliest captured, and the last frame is the latest captured. As depth and color frames are read from independent sources, the two video streams may become out of sync even when both streams are set up for the same frame rate. A post-synchronization procedure can be applied to the streams to combine depth and color frames into pairs. The sample code below demonstrates this procedure:
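A minimal sketch of such a pairing step, written without any camera API so that the logic is easy to follow. The names Frame, maxTimestampDiffNs and pairFrames are hypothetical, timestamps are assumed to be in nanoseconds, and the caller is assumed to hold the lock that protects both lists:

```cpp
#include <cassert>
#include <cstdint>
#include <list>
#include <utility>
#include <vector>

template <class Image>
struct Frame
{
    std::int64_t timestampNs;
    Image image;
};

// Half of the frame period, in nanoseconds, for a given frame rate.
inline std::int64_t maxTimestampDiffNs(double fps)
{
    assert(fps > 0.0);
    return static_cast<std::int64_t>(1e9 / (2.0 * fps));
}

// Consumes frames from the front of both lists (earliest first).
// A frame that is older than its counterpart by more than maxDiffNs is
// dropped; frames that are close enough in time are returned as a pair.
template <class DepthImage, class ColorImage>
std::vector<std::pair<Frame<DepthImage>, Frame<ColorImage>>>
pairFrames(std::list<Frame<DepthImage>>& depthFrames,
           std::list<Frame<ColorImage>>& colorFrames,
           std::int64_t maxDiffNs)
{
    std::vector<std::pair<Frame<DepthImage>, Frame<ColorImage>>> pairs;
    while (!depthFrames.empty() && !colorFrames.empty())
    {
        const std::int64_t depthT = depthFrames.front().timestampNs;
        const std::int64_t colorT = colorFrames.front().timestampNs;
        if (depthT + maxDiffNs < colorT)
            depthFrames.pop_front();       // depth frame is too old
        else if (colorT + maxDiffNs < depthT)
            colorFrames.pop_front();       // color frame is too old
        else
        {
            pairs.emplace_back(std::move(depthFrames.front()),
                               std::move(colorFrames.front()));
            depthFrames.pop_front();
            colorFrames.pop_front();
        }
    }
    return pairs;
}
```

Returning the pairs instead of processing them in place keeps the time spent under the lock short; display or further processing can then happen after the lock is released.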

In the code snippet above, execution is blocked until there are some frames in both frame lists. When there are new frames, their timestamps are checked: if they differ by more than half of the frame period, one of the frames is dropped. If the timestamps are close enough, the two frames are paired. Now we have two frames: one containing color information and another one containing depth information. In the example above, the retrieved frames are simply shown with the cv::imshow function, but you can insert any other processing code here.
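The blocking step mentioned above can be sketched with a condition variable and a predicate. The function name waitForFrames is hypothetical, and the list types are left generic. The lock is returned to the caller so that the lists can be inspected safely afterwards:

```cpp
#include <atomic>
#include <condition_variable>
#include <list>
#include <mutex>

// Blocks until both lists contain at least one frame or until isFinish is
// set. The returned lock is still held, so the caller may read both lists
// and must release the lock before doing slow work such as display.
template <class DepthList, class ColorList>
std::unique_lock<std::mutex> waitForFrames(std::mutex& mtx,
                                           std::condition_variable& dataReady,
                                           const std::atomic<bool>& isFinish,
                                           const DepthList& depthFrames,
                                           const ColorList& colorFrames)
{
    std::unique_lock<std::mutex> lock(mtx);
    // The predicate form of wait() also handles spurious wake-ups.
    dataReady.wait(lock, [&] {
        return isFinish.load() || (!depthFrames.empty() && !colorFrames.empty());
    });
    return lock;
}
```

Because the function also returns when isFinish is set, the caller should check whether the lists are actually non-empty before reading from them.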

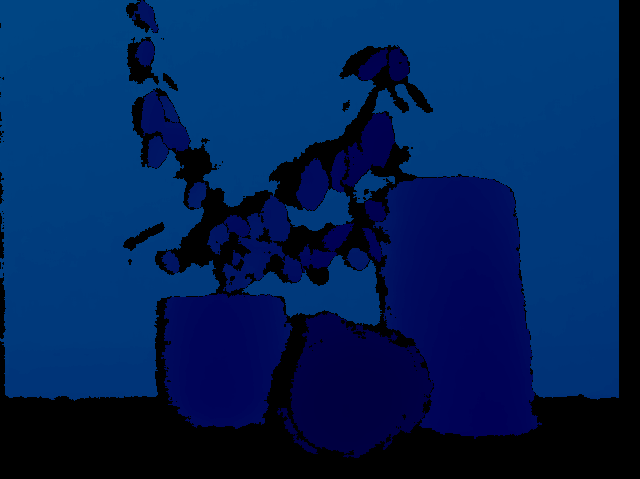

In the sample images below you can see the color frame and the depth frame representing the same scene. Looking at the color frame it's hard to distinguish plant leaves from leaves painted on a wall, but the depth data makes it easy.

The complete implementation can be found in orbbec_astra.cpp in the samples/cpp/tutorial_code/videoio directory.
