Goal

In this tutorial you will learn how to use the GrayCodePattern class to:

- Decode a previously acquired Gray code pattern.

- Generate a disparity map.

- Generate a pointcloud.

Code

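#include <opencv2/highgui.hpp>

#include <opencv2/imgproc.hpp>

#include <opencv2/calib3d.hpp>

#include <opencv2/structured_light.hpp>

#include <iostream>

#include <opencv2/opencv_modules.hpp>

// Without the opencv_viz module only the disparity images are shown

#ifdef HAVE_OPENCV_VIZ

#include <opencv2/viz.hpp>

#endif

using namespace std;

using namespace cv;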

static const char* keys =

{ "{@images_list | | Image list where the captured pattern images are saved}"

"{@calib_param_path | | Calibration_parameters }"

"{@proj_width | | The projector width used to acquire the pattern }"

"{@proj_height | | The projector height used to acquire the pattern}"

"{@white_thresh | | The white threshold (optional)}"

"{@black_thresh | | The black threshold (optional)}" };

static void help()

{

cout << "\nThis example shows how to use the \"Structured Light module\" to decode a previously acquired gray code pattern, generating a pointcloud"

"\nCall:\n"

"./example_structured_light_pointcloud <images_list> <calib_param_path> <proj_width> <proj_height> <white_thresh> <black_thresh>\n"

<< endl;

}

static bool readStringList( const string& filename, vector<string>& l )

{

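l.resize( 0 );

FileStorage fs( filename, FileStorage::READ );

if( !fs.isOpened() )

{

cerr << "failed to open " << filename << endl;

return false;

}

// Images of the first camera

FileNode n = fs["cam1"];

if( n.type() != FileNode::SEQ )

{

cerr << "cam 1 images are not a sequence! FAIL" << endl;

return false;

}

FileNodeIterator it = n.begin(), it_end = n.end();

for( ; it != it_end; ++it )

{

l.push_back( ( string ) *it );

}

// Images of the second camera, appended after those of the first

n = fs["cam2"];

if( n.type() != FileNode::SEQ )

{

cerr << "cam 2 images are not a sequence! FAIL" << endl;

return false;

}

it = n.begin(), it_end = n.end();

for( ; it != it_end; ++it )

{

l.push_back( ( string ) *it );

}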

if( l.size() % 2 != 0 )

{

cout << "Error: the image list contains odd (non-even) number of elements\n";

return false;

}

return true;

}

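int main( int argc, char** argv )

{

structured_light::GrayCodePattern::Params params;

CommandLineParser parser( argc, argv, keys );

String images_file = parser.get<String>( 0 );

String calib_file = parser.get<String>( 1 );

params.width = parser.get<int>( 2 );

params.height = parser.get<int>( 3 );

if( images_file.empty() || calib_file.empty() || params.width < 1 || params.height < 1 || argc < 5 || argc > 7 )

{

help();

return -1;

}

// Set up GrayCodePattern with the projector parameters

Ptr<structured_light::GrayCodePattern> graycode = structured_light::GrayCodePattern::create( params );

size_t white_thresh = 0;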

size_t black_thresh = 0;

if( argc == 7 )

{

white_thresh = parser.get<unsigned>( 4 );

black_thresh = parser.get<unsigned>( 5 );

graycode->setWhiteThreshold( white_thresh );

graycode->setBlackThreshold( black_thresh );

}

vector<string> imagelist;

bool ok = readStringList( images_file, imagelist );

if( !ok || imagelist.empty() )

{

cout << "can not open " << images_file << " or the string list is empty" << endl;

help();

return -1;

}

FileStorage fs( calib_file, FileStorage::READ );

if( !fs.isOpened() )

{

cout << "Failed to open Calibration Data File." << endl;

help();

return -1;

}

Mat cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, R, T;

fs["cam1_intrinsics"] >> cam1intrinsics;

fs["cam2_intrinsics"] >> cam2intrinsics;

fs["cam1_distorsion"] >> cam1distCoeffs;

fs["cam2_distorsion"] >> cam2distCoeffs;

fs["R"] >> R;

fs["T"] >> T;

cout << "cam1intrinsics" << endl << cam1intrinsics << endl;

cout << "cam1distCoeffs" << endl << cam1distCoeffs << endl;

cout << "cam2intrinsics" << endl << cam2intrinsics << endl;

cout << "cam2distCoeffs" << endl << cam2distCoeffs << endl;

cout << "T" << endl << T << endl << "R" << endl << R << endl;

if( (!R.data) || (!T.data) || (!cam1intrinsics.data) || (!cam2intrinsics.data) || (!cam1distCoeffs.data) || (!cam2distCoeffs.data) )

{

cout << "Failed to load cameras calibration parameters" << endl;

help();

return -1;

}

size_t numberOfPatternImages = graycode->getNumberOfPatternImages();

vector<vector<Mat> > captured_pattern;

captured_pattern.resize( 2 );

captured_pattern[0].resize( numberOfPatternImages );

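captured_pattern[1].resize( numberOfPatternImages );

// The white image of cam1 is also loaded in color, to texture the point cloud later

Mat color = imread( imagelist[numberOfPatternImages], IMREAD_COLOR );

Size imagesSize = color.size();

cout << "Rectifying images..." << endl;

Mat R1, R2, P1, P2, Q;

Rect validRoi[2];

stereoRectify( cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, imagesSize, R, T, R1, R2, P1, P2, Q, 0,

-1, imagesSize, &validRoi[0], &validRoi[1] );

Mat map1x, map1y, map2x, map2y;

initUndistortRectifyMap( cam1intrinsics, cam1distCoeffs, R1, P1, imagesSize, CV_32FC1, map1x, map1y );

initUndistortRectifyMap( cam2intrinsics, cam2distCoeffs, R2, P2, imagesSize, CV_32FC1, map2x, map2y );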

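for( size_t i = 0; i < numberOfPatternImages; i++ )

{

// cam1 images come first in the list; cam2 images follow cam1's white and black images

captured_pattern[0][i] = imread( imagelist[i], IMREAD_GRAYSCALE );

captured_pattern[1][i] = imread( imagelist[i + numberOfPatternImages + 2], IMREAD_GRAYSCALE );

if( (!captured_pattern[0][i].data) || (!captured_pattern[1][i].data) )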

{

cout << "Empty images" << endl;

help();

return -1;

}

}

cout << "done" << endl;

vector<Mat> blackImages;

vector<Mat> whiteImages;

blackImages.resize( 2 );

whiteImages.resize( 2 );

cout << endl << "Decoding pattern ..." << endl;

Mat disparityMap;

bool decoded = graycode->decode( captured_pattern, disparityMap, blackImages, whiteImages,

structured_light::DECODE_3D_UNDERWORLD );

if( decoded )

{

cout << endl << "pattern decoded" << endl;

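// Apply a colormap to the computed disparity for visualization

double min;

double max;

minMaxIdx( disparityMap, &min, &max );

Mat cm_disp, scaledDisparityMap;

cout << "disp min " << min << endl << "disp max " << max << endl;

convertScaleAbs( disparityMap, scaledDisparityMap, 255 / ( max - min ) );

applyColorMap( scaledDisparityMap, cm_disp, COLORMAP_JET );

resize( cm_disp, cm_disp, Size( 640, 480 ) );

imshow( "cm disparity m", cm_disp );

// Compute the point cloud

Mat pointcloud;

disparityMap.convertTo( disparityMap, CV_32FC1 );

reprojectImageTo3D( disparityMap, pointcloud, Q, true, -1 );

// Compute a mask to remove the background

Mat dst, thresholded_disp;

threshold( scaledDisparityMap, thresholded_disp, 0, 255, THRESH_OTSU + THRESH_BINARY );

resize( thresholded_disp, dst, Size( 640, 480 ) );

imshow( "threshold disp otsu", dst );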

#ifdef HAVE_OPENCV_VIZ

Mat pointcloud_tresh, color_tresh;

pointcloud.copyTo( pointcloud_tresh, thresholded_disp );

color.copyTo( color_tresh, thresholded_disp );

viz::Viz3d myWindow( "Point cloud with color" );

myWindow.setBackgroundMeshLab();

myWindow.showWidget( "coosys", viz::WCoordinateSystem() );

myWindow.showWidget( "pointcloud", viz::WCloud( pointcloud_tresh, color_tresh ) );

myWindow.showWidget( "text2d", viz::WText( "Point cloud", Point(20, 20), 20, viz::Color::green() ) );

myWindow.spin();

#endif // HAVE_OPENCV_VIZ

}

// Keep the imshow windows open until a key is pressed

waitKey();

return 0;

}

Explanation

First, the required parameters must be passed to the program. The first is the list of file names of the previously acquired pattern images, stored in a .yaml file organized as follows:

%YAML:1.0

cam1:

- "/data/pattern_cam1_im1.png"

- "/data/pattern_cam1_im2.png"

..............

- "/data/pattern_cam1_im42.png"

- "/data/pattern_cam1_im43.png"

- "/data/pattern_cam1_im44.png"

cam2:

- "/data/pattern_cam2_im1.png"

- "/data/pattern_cam2_im2.png"

..............

- "/data/pattern_cam2_im42.png"

- "/data/pattern_cam2_im43.png"

- "/data/pattern_cam2_im44.png"

For example, the dataset used for this tutorial was acquired using a projector with a resolution of 1280x800, so 42 pattern images (numbers 1 to 42), plus 1 white image (number 43) and 1 black image (number 44), were captured with each of the two cameras.
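The figure of 42 images can be checked by hand. The sketch below assumes that each bit of the column code and of the row code is projected twice, once as a pattern and once as its inverse; `bitsFor` and `patternImageCount` are hypothetical helper names, not part of the module. In real code the count should always be taken from getNumberOfPatternImages().

```cpp
#include <cassert>
#include <cstddef>

// Number of bits needed to give each of n projector columns (or rows)
// a distinct code word: the smallest b such that 2^b >= n.
std::size_t bitsFor( std::size_t n )
{
  assert( n > 0 );
  std::size_t bits = 0;
  while( ( std::size_t( 1 ) << bits ) < n )
    ++bits;
  return bits;
}

// Assumption: every bit is projected twice (pattern and inverse),
// once for the columns and once for the rows.
std::size_t patternImageCount( std::size_t projWidth, std::size_t projHeight )
{
  return 2 * bitsFor( projWidth ) + 2 * bitsFor( projHeight );
}
```

For a 1280x800 projector this gives 11 bits for the columns and 10 bits for the rows, hence 2 * 11 + 2 * 10 = 42 images, which agrees with the dataset described above.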

Then the camera calibration parameters, stored in another .yml file, together with the width and the height of the projector used to project the pattern and, optionally, the values of the white and black thresholds, must be passed to the tutorial program.

In this way, the GrayCodePattern class parameters can be set up with the width and the height of the projector used during the pattern acquisition, and a pointer to a GrayCodePattern object can be created:

....

params.width = parser.get<int>( 2 );

params.height = parser.get<int>( 3 );

....

Ptr<structured_light::GrayCodePattern> graycode = structured_light::GrayCodePattern::create( params );

If the white and black thresholds are passed as parameters (these thresholds influence the number of decoded pixels), their values can be set; otherwise the algorithm uses the default values.

size_t white_thresh = 0;

size_t black_thresh = 0;

if( argc == 7 )

{

white_thresh = parser.get<unsigned>( 4 );

black_thresh = parser.get<unsigned>( 5 );

graycode->setWhiteThreshold( white_thresh );

graycode->setBlackThreshold( black_thresh );

}
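To give an intuition of why these thresholds change the number of decoded pixels, the sketch below shows the kind of per-pixel tests they are typically used for. It is an assumption about the general technique, not a copy of the module's internals, and the function names are hypothetical: the black threshold is taken to separate lit pixels from shadowed ones, and the white threshold to reject bits whose pattern and inverse intensities are too close.

```cpp
#include <cassert>
#include <cstdlib>

// Assumed shadow test: a pixel counts as lit by the projector only if the
// all-white and all-black captures differ by more than blackThreshold.
bool isLit( int white, int black, int blackThreshold )
{
  return std::abs( white - black ) > blackThreshold;
}

// Assumed bit test: a pattern/inverse pair yields a reliable bit only if
// the two intensities differ by at least whiteThreshold.
bool isReliableBit( int pattern, int inverse, int whiteThreshold )
{
  return std::abs( pattern - inverse ) >= whiteThreshold;
}

// The bit is read as 1 when the pattern image is brighter than its inverse.
int decodeBit( int pattern, int inverse )
{
  return pattern > inverse ? 1 : 0;
}
```

Under these assumptions, raising either threshold discards more pixels: fewer are considered lit, and fewer bits are considered reliable.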

At this point, to use the decode method of the GrayCodePattern class, the acquired pattern images must be stored in a vector of vectors of Mat. The outer vector has a size of two because there are two cameras: the first inner vector stores the pattern images captured by the left camera, the second those acquired by the right one. The number of pattern images is the same for both cameras and can be retrieved using the getNumberOfPatternImages() method:

size_t numberOfPatternImages = graycode->getNumberOfPatternImages();

vector<vector<Mat> > captured_pattern;

captured_pattern.resize( 2 );

captured_pattern[0].resize( numberOfPatternImages );

captured_pattern[1].resize( numberOfPatternImages );

.....

for( size_t i = 0; i < numberOfPatternImages; i++ )

{

......

}

The black and white images, in turn, must be stored in two separate vectors of Mat:

vector<Mat> blackImages;

vector<Mat> whiteImages;

blackImages.resize( 2 );

whiteImages.resize( 2 );
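Since readStringList appends the cam2 entries after the cam1 entries, every image has a fixed position in the flat list. The sketch below makes that layout explicit; it assumes the .yaml ordering shown earlier (N pattern images, then the white image, then the black image, for each camera), and `ImageListLayout` is a hypothetical helper that is not part of the sample.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper: positions inside the flat list built by readStringList,
// where cam1's N patterns + white + black are followed by cam2's.
struct ImageListLayout
{
  std::size_t n;  // number of pattern images per camera

  // Index of the i-th pattern image of camera cam (0 = left, 1 = right).
  std::size_t pattern( std::size_t cam, std::size_t i ) const
  {
    assert( cam < 2 && i < n );
    return cam * ( n + 2 ) + i;
  }

  // Index of the all-white image of camera cam.
  std::size_t white( std::size_t cam ) const
  {
    assert( cam < 2 );
    return cam * ( n + 2 ) + n;
  }

  // Index of the all-black image of camera cam.
  std::size_t black( std::size_t cam ) const
  {
    assert( cam < 2 );
    return cam * ( n + 2 ) + n + 1;
  }
};
```

With 42 pattern images per camera, the white and black images of cam1 sit at indices 42 and 43, the cam2 patterns start at index 44, and the white and black images of cam2 sit at indices 86 and 87.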

It is important to underline that all the images (pattern, black and white) must be loaded as grayscale images and rectified before being passed to the decode method:

cout << "Rectifying images..." << endl;

Mat R1, R2, P1, P2, Q;

stereoRectify( cam1intrinsics, cam1distCoeffs, cam2intrinsics, cam2distCoeffs, imagesSize, R, T, R1, R2, P1, P2, Q, 0,

-1, imagesSize, &validRoi[0], &validRoi[1] );

Mat map1x, map1y, map2x, map2y;

........

for( size_t i = 0; i < numberOfPatternImages; i++ )

{

........

}

........

In this way the decode method can be called to decode the pattern and to generate the corresponding disparity map, computed on the first camera (left):

Mat disparityMap;

bool decoded = graycode->decode( captured_pattern, disparityMap, blackImages, whiteImages,

structured_light::DECODE_3D_UNDERWORLD );

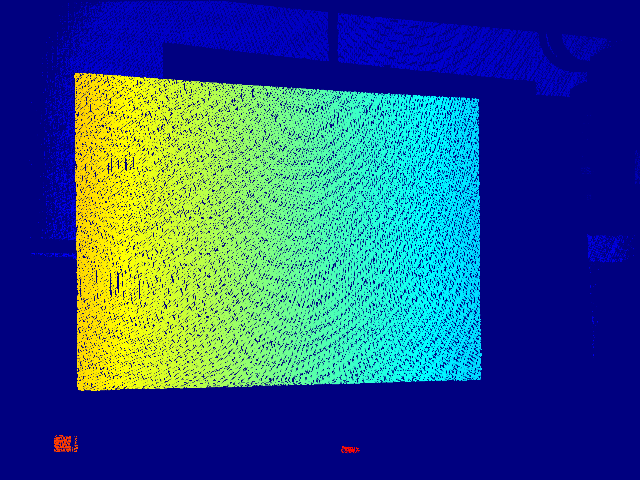
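As background on what decoding has to recover for each pixel, the sketch below shows the standard conversion between a binary projector coordinate and its Gray code. It only illustrates the encoding itself and is not the module's implementation; `binaryToGray` and `grayToBinary` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>

// Binary to reflected Gray code: consecutive values differ in exactly one bit.
std::uint32_t binaryToGray( std::uint32_t b )
{
  return b ^ ( b >> 1 );
}

// Gray code back to binary: each output bit is the XOR of all Gray bits
// at or above its position, computed here as a prefix XOR.
std::uint32_t grayToBinary( std::uint32_t g )
{
  std::uint32_t b = g;
  for( std::uint32_t shift = 1; shift < 32; shift <<= 1 )
    b ^= b >> shift;
  return b;
}
```

The one-bit difference between neighbouring code words is what makes the pattern robust: a pixel lying on a stripe boundary can be wrong in at most one bit, so its decoded column or row is off by at most one.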

To better visualize the result, a colormap is applied to the computed disparity:

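double min, max;

minMaxIdx( disparityMap, &min, &max );

Mat cm_disp, scaledDisparityMap;

cout << "disp min " << min << endl << "disp max " << max << endl;

convertScaleAbs( disparityMap, scaledDisparityMap, 255 / ( max - min ) );

applyColorMap( scaledDisparityMap, cm_disp, COLORMAP_JET );

resize( cm_disp, cm_disp, Size( 640, 480 ) );

imshow( "cm disparity m", cm_disp );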

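At this point the point cloud can be generated using the reprojectImageTo3D method, taking care to convert the computed disparity to a CV_32FC1 Mat (the decode method computes a CV_64FC1 disparity map):

Mat pointcloud;

disparityMap.convertTo( disparityMap, CV_32FC1 );

reprojectImageTo3D( disparityMap, pointcloud, Q, true, -1 );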
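The reprojection itself is a product with the 4x4 matrix Q returned by stereoRectify, followed by a division by the homogeneous coordinate. The sketch below reproduces that formula for a single pixel using only the standard library; `Mat4`, `Point3` and `reproject` are hypothetical names introduced for illustration, and the handling of missing disparities is left out.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<double, 4>, 4>;

struct Point3
{
  double x, y, z;
};

// Computes [X Y Z W]^T = Q * [x y disparity 1]^T and returns (X/W, Y/W, Z/W).
Point3 reproject( const Mat4& Q, double x, double y, double disparity )
{
  const double in[4] = { x, y, disparity, 1.0 };
  double out[4] = { 0.0, 0.0, 0.0, 0.0 };
  for( int r = 0; r < 4; ++r )
    for( int c = 0; c < 4; ++c )
      out[r] += Q[r][c] * in[c];
  assert( out[3] != 0.0 );  // a zero W means the point lies at infinity
  return Point3{ out[0] / out[3], out[1] / out[3], out[2] / out[3] };
}
```

Because W is proportional to the disparity, depth is inversely proportional to it: pixels with a larger disparity are reconstructed closer to the cameras.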

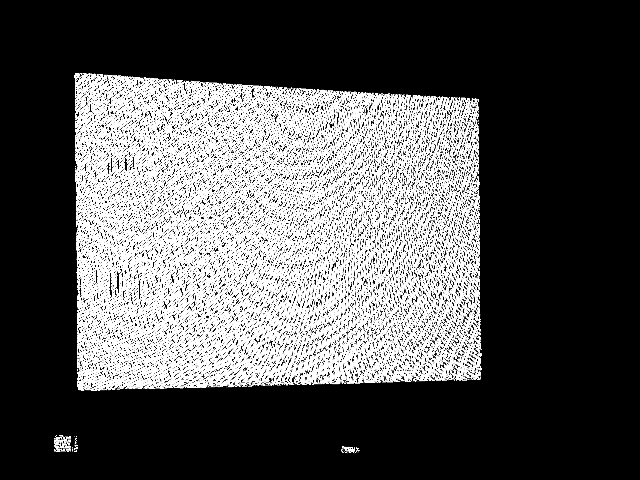

Then a mask to remove the unwanted background is computed:

Mat dst, thresholded_disp;

threshold( scaledDisparityMap, thresholded_disp, 0, 255, THRESH_OTSU + THRESH_BINARY );

resize( thresholded_disp, dst, Size( 640, 480 ) );

imshow( "threshold disp otsu", dst );

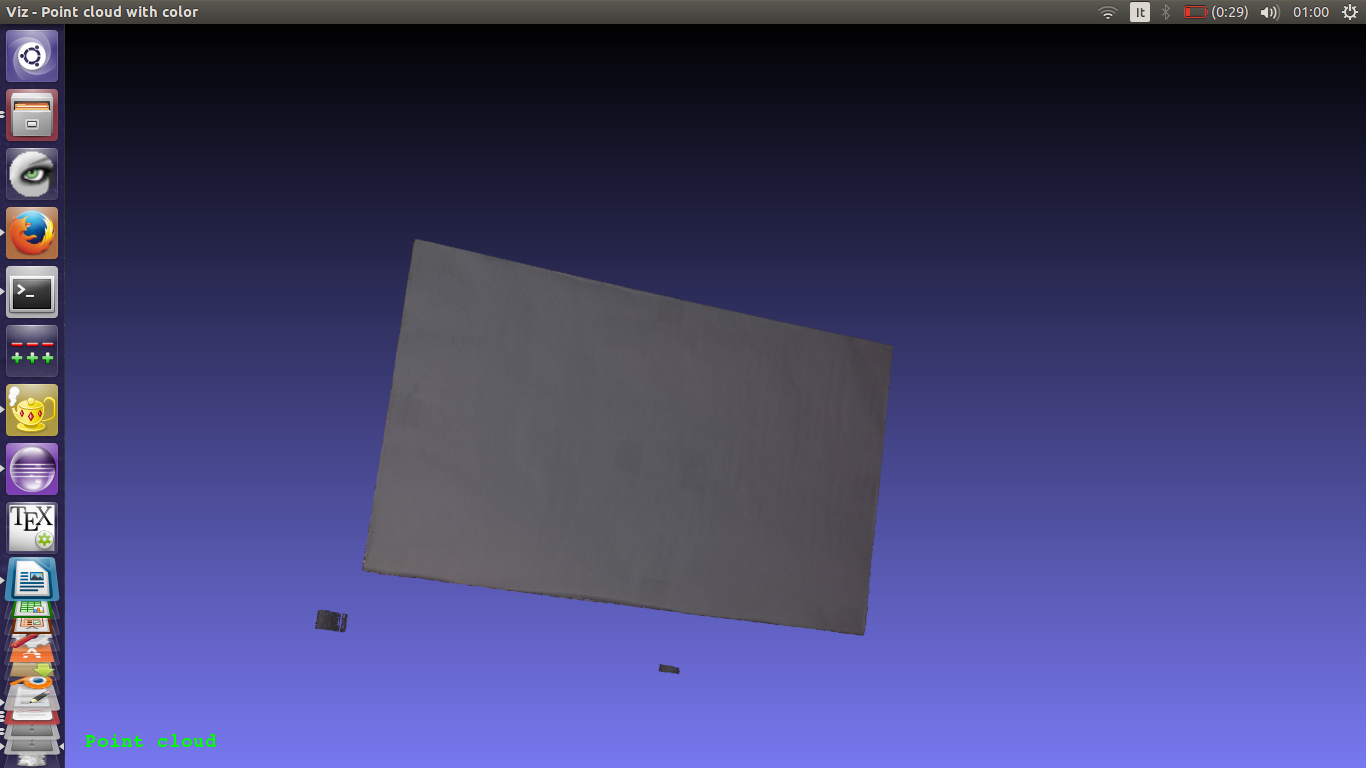
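The window title suggests that the mask comes from Otsu's method, which picks the threshold that best separates the 8-bit disparity values into two classes. The sketch below is a plain standard-library version of that idea, given only to show what the thresholding step computes; `otsuThreshold` and `binarize` are hypothetical names, and the result is not guaranteed to match OpenCV's output value for value.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Returns the threshold t that maximizes the between-class variance
// of the two classes { p <= t } and { p > t }.
int otsuThreshold( const std::vector<std::uint8_t>& pixels )
{
  assert( !pixels.empty() );
  double hist[256] = { 0.0 };
  for( std::uint8_t p : pixels )
    hist[p] += 1.0;

  const double total = static_cast<double>( pixels.size() );
  double sumAll = 0.0;
  for( int i = 0; i < 256; ++i )
    sumAll += i * hist[i];

  double sumBelow = 0.0, weightBelow = 0.0, bestVar = -1.0;
  int best = 0;
  for( int t = 0; t < 256; ++t )
  {
    weightBelow += hist[t];
    sumBelow += t * hist[t];
    if( weightBelow == 0.0 )
      continue;  // no pixels in the lower class yet
    const double weightAbove = total - weightBelow;
    if( weightAbove == 0.0 )
      break;  // every pixel is already in the lower class
    const double meanBelow = sumBelow / weightBelow;
    const double meanAbove = ( sumAll - sumBelow ) / weightAbove;
    const double diff = meanBelow - meanAbove;
    const double var = weightBelow * weightAbove * diff * diff;
    if( var > bestVar )
    {
      bestVar = var;
      best = t;
    }
  }
  return best;
}

// Binary mask: 255 where the pixel is above the threshold, 0 elsewhere.
std::vector<std::uint8_t> binarize( const std::vector<std::uint8_t>& pixels, int t )
{
  std::vector<std::uint8_t> mask( pixels.size(), 0 );
  for( std::size_t i = 0; i < pixels.size(); ++i )
    if( pixels[i] > t )
      mask[i] = 255;
  return mask;
}
```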

The white image of cam1 was previously loaded also as a color image, in order to map the color of the object onto its reconstructed point cloud:

Mat color = imread( imagelist[numberOfPatternImages], IMREAD_COLOR );

The background removal mask is thus applied to the point cloud and to the color image:

Mat pointcloud_tresh, color_tresh;

pointcloud.copyTo( pointcloud_tresh, thresholded_disp );

color.copyTo(color_tresh, thresholded_disp);
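The effect of copyTo with a mask on a freshly created destination can be pictured as follows: elements where the mask is non-zero are copied, and all the others stay at zero. The sketch below mimics that behaviour on flat vectors; `maskedCopy` is a hypothetical helper, not an OpenCV function.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Copies src[i] to the output where mask[i] is non-zero; every other
// output element keeps its zero-initialized value.
template <typename T>
std::vector<T> maskedCopy( const std::vector<T>& src, const std::vector<std::uint8_t>& mask )
{
  assert( src.size() == mask.size() );
  std::vector<T> dst( src.size(), T() );
  for( std::size_t i = 0; i < src.size(); ++i )
    if( mask[i] != 0 )
      dst[i] = src[i];
  return dst;
}
```

Applied to the point cloud and to the color image with the same mask, this keeps the 3D points of the scanned object and their colors aligned, while background points collapse to the origin with a black color.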

Finally, the computed point cloud of the scanned object can be visualized with the viz module:

viz::Viz3d myWindow( "Point cloud with color");

myWindow.setBackgroundMeshLab();

myWindow.showWidget( "coosys", viz::WCoordinateSystem());

myWindow.showWidget( "pointcloud", viz::WCloud( pointcloud_tresh, color_tresh ) );

myWindow.showWidget( "text2d", viz::WText( "Point cloud", Point(20, 20), 20, viz::Color::green() ) );

myWindow.spin();
