In this text you will learn how to use the opencv_dnn module with the yolo_object_detection sample. The sample runs the YOLO object detector in real time on frames from a capture device, a video file or a single image.

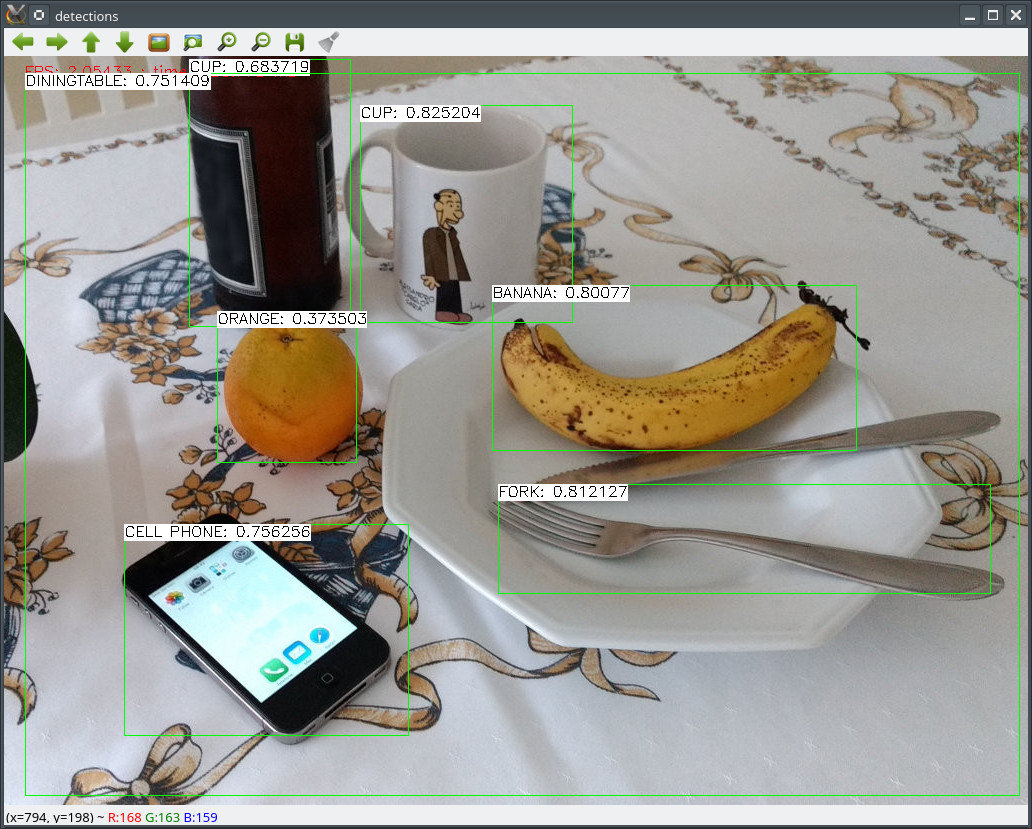

We will demonstrate results of this example on the following picture.

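    #include <opencv2/dnn.hpp>
    #include <opencv2/imgproc.hpp>
    #include <opencv2/highgui.hpp>
    #include <opencv2/videoio.hpp>

    #include <algorithm>
    #include <cstdlib>
    #include <fstream>
    #include <iostream>

    using namespace cv;
    using namespace cv::dnn;
    using namespace std;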

    static const char* about =
        "This sample uses You only look once (YOLO)-Detector (https://arxiv.org/abs/1612.08242) to detect objects on camera/video/image.\n"
        "Models can be downloaded here: https://pjreddie.com/darknet/yolo/\n"
        "Default network is 416x416.\n"
        "Class names can be downloaded here: https://github.com/pjreddie/darknet/tree/master/data\n";

    static const char* params =
        "{ help           | false | print usage }"
        "{ cfg            |       | model configuration }"
        "{ model          |       | model weights }"
        "{ camera_device  | 0     | camera device number }"
        "{ source         |       | video or image for detection }"
        "{ out            |       | path to output video file }"
        "{ fps            | 3     | frames per second of the output video }"
        "{ style          | box   | drawing style: box or line }"
        "{ min_confidence | 0.24  | min confidence }"
        "{ class_names    |       | File with class names, [PATH-TO-DARKNET]/data/coco.names }";

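    int main(int argc, char** argv)
    {
        CommandLineParser parser(argc, argv, params);

        if (parser.get<bool>("help"))
        {
            cout << about << endl;
            parser.printMessage();
            return 0;
        }

        // Load the Darknet network from its .cfg and .weights files
        String modelConfiguration = parser.get<String>("cfg");
        String modelBinary = parser.get<String>("model");
        Net net = readNetFromDarknet(modelConfiguration, modelBinary);

        if (net.empty())
        {
            cerr << "Can't load network by using the following files: " << endl;
            cerr << "cfg-file: " << modelConfiguration << endl;
            cerr << "weights-file: " << modelBinary << endl;
            cerr << "Models can be downloaded here:" << endl;
            cerr << "https://pjreddie.com/darknet/yolo/" << endl;
            exit(-1);
        }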

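        // With no --source given, read from the camera; otherwise open the video or image file
        VideoCapture cap;
        VideoWriter writer;
        int codec = VideoWriter::fourcc('M', 'J', 'P', 'G');
        double fps = parser.get<float>("fps");
        if (parser.get<String>("source").empty())
        {
            int cameraDevice = parser.get<int>("camera_device");
            cap.open(cameraDevice);
            if (!cap.isOpened())
            {
                cout << "Couldn't find camera: " << cameraDevice << endl;
                return -1;
            }
        }
        else
        {
            cap.open(parser.get<String>("source"));
            if (!cap.isOpened())
            {
                cout << "Couldn't open image or video: " << parser.get<String>("source") << endl;
                return -1;
            }
        }

        // Optionally record the annotated frames to the file given by --out
        if (!parser.get<String>("out").empty())
        {
            Size frameSize((int)cap.get(CAP_PROP_FRAME_WIDTH), (int)cap.get(CAP_PROP_FRAME_HEIGHT));
            writer.open(parser.get<String>("out"), codec, fps, frameSize, true);
        }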

        vector<String> classNamesVec;
        ifstream classNamesFile(parser.get<String>("class_names").c_str());
        if (classNamesFile.is_open())
        {
            string className = "";
            while (std::getline(classNamesFile, className))
                classNamesVec.push_back(className);
        }

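        String object_roi_style = parser.get<String>("style");

        for (;;)
        {
            Mat frame;
            cap >> frame; // get a new frame from the camera, video or image
            if (frame.empty())
            {
                waitKey(); // end of stream: keep the last frame on screen until a key is pressed
                break;
            }

            // The network expects 3-channel input; drop the alpha channel if present
            if (frame.channels() == 4)
                cvtColor(frame, frame, COLOR_BGRA2BGR);

            // Scale pixels to [0, 1], resize to 416x416 and swap the B and R channels
            Mat inputBlob = blobFromImage(frame, 1 / 255.F, Size(416, 416), Scalar(), true, false);
            net.setInput(inputBlob, "data");
            Mat detectionMat = net.forward("detection_out");

            // Overlay the inference time reported by the network itself
            vector<double> layersTimings;
            double tick_freq = getTickFrequency();
            double time_ms = net.getPerfProfile(layersTimings) / tick_freq * 1000;
            putText(frame, cv::format("FPS: %.2f ; time: %.2f ms", 1000.f / time_ms, time_ms),
                    Point(20, 20), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

            float confidenceThreshold = parser.get<float>("min_confidence");
            // Columns 0 to 3 of each row hold the box center and size relative to the frame;
            // class scores start at column 5
            for (int i = 0; i < detectionMat.rows; i++)
            {
                const int probability_index = 5;
                const int probability_size = detectionMat.cols - probability_index;
                float *prob_array_ptr = &detectionMat.at<float>(i, probability_index);

                size_t objectClass = max_element(prob_array_ptr, prob_array_ptr + probability_size) - prob_array_ptr;
                float confidence = detectionMat.at<float>(i, (int)objectClass + probability_index);

                if (confidence > confidenceThreshold)
                {
                    float x_center = detectionMat.at<float>(i, 0) * frame.cols;
                    float y_center = detectionMat.at<float>(i, 1) * frame.rows;
                    float width = detectionMat.at<float>(i, 2) * frame.cols;
                    float height = detectionMat.at<float>(i, 3) * frame.rows;
                    Point p1(cvRound(x_center - width / 2), cvRound(y_center - height / 2));
                    Point p2(cvRound(x_center + width / 2), cvRound(y_center + height / 2));
                    Rect object(p1, p2);

                    Scalar object_roi_color(0, 255, 0);

                    if (object_roi_style == "box")
                    {
                        rectangle(frame, object, object_roi_color);
                    }
                    else
                    {
                        Point p_center(cvRound(x_center), cvRound(y_center));
                        line(frame, object.tl(), p_center, object_roi_color, 1);
                    }

                    String className = objectClass < classNamesVec.size() ? classNamesVec[objectClass] : cv::format("unknown(%d)", (int)objectClass);
                    String label = cv::format("%s: %.2f", className.c_str(), confidence);
                    int baseLine = 0;
                    Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
                    rectangle(frame, Rect(p1, Size(labelSize.width, labelSize.height + baseLine)),
                              object_roi_color, FILLED);
                    putText(frame, label, p1 + Point(0, labelSize.height),
                            FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 0));
                }
            }

            if (writer.isOpened())
                writer.write(frame);

            imshow("YOLO: Detections", frame);
            if (waitKey(1) >= 0)
                break;
        }

        return 0;
    }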
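
The per-row decoding inside the detection loop can be sketched without OpenCV. It takes the arg-max over the class scores from column 5 onward, applies a confidence threshold, and scales the relative center and size to pixels. The sketch makes these substitutions:

- cv::Mat is replaced by a vector of rows.
- cvRound is replaced by std::lrint.
- The returned corner and size correspond to what the sample builds with `Rect(p1, p2)`.
- `Detection` and `decodeRows` are hypothetical names.
- The guard against rows without class scores is an addition.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical result type: class index, score and pixel box (top-left corner + size).
struct Detection
{
    int classId;
    float confidence;
    int x, y, width, height;
};

// Sketch of the sample's per-row decoding. Rows are assumed to follow the layout
// the sample reads: columns 0..3 = center x, center y, width, height relative to
// the frame; class scores start at column 5.
std::vector<Detection> decodeRows(const std::vector<std::vector<float>>& rows,
                                  int frameWidth, int frameHeight, float threshold)
{
    const std::size_t probabilityIndex = 5;
    std::vector<Detection> out;
    for (const auto& row : rows)
    {
        if (row.size() <= probabilityIndex)
            continue; // addition: skip rows that carry no class scores

        // Arg-max over the class scores, as max_element does in the sample
        auto first = row.begin() + probabilityIndex;
        auto best = std::max_element(first, row.end());
        const float confidence = *best;
        if (confidence <= threshold)
            continue;

        // Scale relative coordinates to pixels
        const float xCenter = row[0] * frameWidth;
        const float yCenter = row[1] * frameHeight;
        const float w = row[2] * frameWidth;
        const float h = row[3] * frameHeight;

        Detection d;
        d.classId = static_cast<int>(best - first);
        d.confidence = confidence;
        d.x = static_cast<int>(std::lrint(xCenter - w / 2));
        d.y = static_cast<int>(std::lrint(yCenter - h / 2));
        d.width = static_cast<int>(std::lrint(xCenter + w / 2)) - d.x;
        d.height = static_cast<int>(std::lrint(yCenter + h / 2)) - d.y;
        out.push_back(d);
    }
    return out;
}
```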

1.8.12
